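Bazel is complex and does a lot of different things over the course of a build, some of which can have an impact on build performance. This page attempts to map some of these Bazel concepts to their implications on build performance. While not exhaustive, it includes some examples of how to detect build performance issues by extracting metrics, and what you can do to fix them. We hope you can apply these concepts when investigating build performance regressions.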

Clean vs incremental builds

A clean build is one that builds everything from scratch, while an incremental build reuses some already completed work.

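We suggest looking at clean and incremental builds separately, especially when you are collecting / aggregating metrics that depend on the state of Bazel's caches (for example, build request size metrics). They also represent two different user experiences. Clean builds start from scratch and take longer because the cache is cold. Incremental builds happen far more frequently, because developers iterate on code, and they are typically faster because the cache is usually already warm.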

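You can use the CumulativeMetrics.num_analyses field in the BEP to classify builds. If num_analyses <= 1, it is a clean build. Otherwise, we can broadly categorize it as likely being an incremental build. Even then, the user could have switched to different flags or different targets, causing an effectively clean build. Any more rigorous definition of incrementality will likely have to take the form of a heuristic, for example looking at the number of packages loaded (PackageMetrics.packages_loaded).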
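As a minimal sketch of this classification, the following Python reads a BEP file written with --build_event_json_file (newline-delimited JSON) and applies the num_analyses rule. It assumes the standard proto3 JSON mapping of field names (buildMetrics, cumulativeMetrics.numAnalyses, packageMetrics.packagesLoaded). The function names are hypothetical.

```python
import json
from typing import Iterable, Optional


def read_build_metrics(lines: Iterable[str]) -> Optional[dict]:
    """Return the buildMetrics payload from newline-delimited BEP JSON, if any."""
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        # The payload key sits next to "id"; only the BuildMetrics event has it.
        if "buildMetrics" in event:
            return event["buildMetrics"]
    return None


def classify_build(metrics: dict) -> str:
    """Apply the num_analyses rule: <= 1 means clean, otherwise likely incremental."""
    # proto3 JSON omits zero-valued fields and may encode integers as strings,
    # so default to 0 and coerce with int().
    num_analyses = int(metrics.get("cumulativeMetrics", {}).get("numAnalyses", 0))
    if num_analyses <= 1:
        return "clean"
    # Changed flags or targets can still cause an effectively clean build.
    return "likely incremental"


def packages_loaded(metrics: dict) -> int:
    """PackageMetrics.packages_loaded, a possible input to stricter heuristics."""
    return int(metrics.get("packageMetrics", {}).get("packagesLoaded", 0))
```

For example, `classify_build(read_build_metrics(open("bep.json")))` returns either "clean" or "likely incremental" for the build that wrote bep.json.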

Deterministic build metrics as a proxy for build performance

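Measuring build performance can be difficult because certain metrics are non-deterministic (for example, Bazel's CPU time or queuing times on a remote cluster). It can therefore be useful to use deterministic metrics as a proxy for the amount of work Bazel does, which in turn affects its performance.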

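The size of a build request can have a significant impact on build performance. A larger build can mean more work in analyzing and constructing the build graphs. Builds grow naturally with development: as more dependencies are added or created, builds become more complex and more expensive to build.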

We can break this problem down into the individual build phases and use the following metrics as proxies for the work done in each phase:

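PackageMetrics.packages_loaded: the number of packages successfully loaded. A regression here represents more work that needs to be done to read and parse each additional BUILD file in the loading phase.

TargetMetrics.targets_configured: the number of targets and aspects configured in the build. A regression represents more work in constructing and traversing the configured target graph.

- This is often due to the addition of dependencies and having to construct the graph of their transitive closure.
- Use cquery to find where new dependencies might have been added.

ActionSummary.actions_created: the number of actions created in the build. A regression represents more work in constructing the action graph. Note that this also includes unused actions that might not have been executed.

- Use aquery for debugging regressions; we suggest starting with --output=summary before drilling down further with --skyframe_state.

ActionSummary.actions_executed: the number of actions executed. A regression directly represents more work in executing these actions.

- The BEP writes out the action statistics ActionData, which shows the most executed action types. By default, it collects the top 20 action types, but you can pass in --experimental_record_metrics_for_all_mnemonics to collect this data for all action types that were executed.
- This should help you figure out which (additional) kinds of actions were executed.

BuildGraphSummary.outputArtifactCount: the number of artifacts created by the executed actions.

- If the number of actions executed did not increase, then it is likely that a rule implementation was changed.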
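A short script can compare these metrics between a baseline build and a candidate build. This is a sketch that assumes both BEP files were written with --build_event_json_file from clean builds. It also assumes that the proto3 JSON paths in METRIC_PATHS match your Bazel version; in particular, the path used for the output artifact count is an assumption. All names are hypothetical.

```python
import json
from typing import Dict, Iterable

# Assumed proto3 JSON paths inside the BEP buildMetrics payload.
METRIC_PATHS = {
    "packages_loaded": ("packageMetrics", "packagesLoaded"),
    "targets_configured": ("targetMetrics", "targetsConfigured"),
    "actions_created": ("actionSummary", "actionsCreated"),
    "actions_executed": ("actionSummary", "actionsExecuted"),
    "output_artifact_count": ("buildGraphMetrics", "outputArtifactCount"),
}


def build_metrics(lines: Iterable[str]) -> dict:
    """Return the buildMetrics payload from newline-delimited BEP JSON."""
    for line in lines:
        if line.strip():
            event = json.loads(line)
            if "buildMetrics" in event:
                return event["buildMetrics"]
    raise ValueError("no BuildMetrics event found")


def extract(metrics: dict) -> Dict[str, int]:
    """Flatten the proxy metrics; absent fields (zero in proto3 JSON) become 0."""
    return {
        name: int(metrics.get(group, {}).get(field, 0))
        for name, (group, field) in METRIC_PATHS.items()
    }


def executed_by_mnemonic(metrics: dict) -> Dict[str, int]:
    """ActionData per mnemonic (top 20 unless all mnemonics were recorded)."""
    return {
        d.get("mnemonic", "?"): int(d.get("actionsExecuted", 0))
        for d in metrics.get("actionSummary", {}).get("actionData", [])
    }


def regressions(base: Dict[str, int], new: Dict[str, int],
                tolerance: float = 0.0) -> Dict[str, tuple]:
    """Return {name: (base, new)} for values that grew by more than `tolerance` (a fraction)."""
    result = {}
    for name in sorted(set(base) | set(new)):
        b, n = base.get(name, 0), new.get(name, 0)
        if n > b * (1 + tolerance):
            result[name] = (b, n)
    return result
```

Calling `regressions(extract(old), extract(new))` flags which build phase grew. Passing the `executed_by_mnemonic` results to the same function gives a per-action-type view of the ActionData statistics.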

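All of these metrics are affected by the state of the local cache, so make sure that the builds you extract these metrics from are clean builds.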

We have noted that a regression in any of these metrics can be accompanied by regressions in wall time, CPU time, and memory usage.

Usage of local resources

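Bazel consumes a variety of resources on your local machine, both to analyze the build graph and drive execution and to run local actions. This can affect the performance and availability of your machine for the build and for other tasks.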

Time spent

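The metrics most susceptible to noise (and the ones that can vary greatly from build to build) are probably the time metrics: in particular, wall time, CPU time, and system time. You can use bazel-bench to get a benchmark for these metrics. With a sufficient number of --runs, you can increase the statistical significance of your measurement.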
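One common way to judge whether the difference between two sets of runs is larger than run-to-run noise is Welch's t-test. This sketch assumes you have already collected per-run times (for example, wall time in seconds for each bazel-bench run) into two lists. It uses only the Python standard library and is not part of bazel-bench itself.

```python
import math
import statistics
from typing import Sequence


def welch_t(a: Sequence[float], b: Sequence[float]) -> float:
    """Welch's t statistic for mean(b) - mean(a); each sample needs >= 2 runs."""
    # Raises ZeroDivisionError if both samples have zero variance.
    se = math.sqrt(statistics.variance(a) / len(a) + statistics.variance(b) / len(b))
    return (statistics.mean(b) - statistics.mean(a)) / se


def welch_df(a: Sequence[float], b: Sequence[float]) -> float:
    """Welch-Satterthwaite approximation of the degrees of freedom."""
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    return (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
```

A |t| well above about 2, with a reasonable number of degrees of freedom, suggests the difference is unlikely to be noise. For a proper p-value, a statistics package such as SciPy (`scipy.stats.ttest_ind(a, b, equal_var=False)`) can be used instead. More runs shrink the standard error, which is why increasing --runs helps.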

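Wall time is the real-world time elapsed.

- If only wall time regresses, we suggest collecting a JSON trace profile and looking for differences. Otherwise, it is likely more efficient to investigate the other regressed metrics, since they could have affected the wall time.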
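Besides opening two JSON trace profiles in a trace viewer, one way to look for differences is to total the time spent per event category. This sketch makes several assumptions:

- The profile follows the Trace Event Format, with a traceEvents list whose complete events have ph == "X" and dur in microseconds.
- The profile may be gzip-compressed.
- The helper names are hypothetical.

```python
import gzip
import json
from collections import defaultdict
from typing import Dict, List, Tuple


def load_profile(path: str):
    """Load a JSON trace profile, transparently handling .gz files."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt", encoding="utf-8") as f:
        return json.load(f)


def duration_by_category(profile) -> Dict[str, float]:
    """Sum durations (in ms) of complete ("X") events per category."""
    # The format allows either {"traceEvents": [...]} or a bare list of events.
    events = profile["traceEvents"] if isinstance(profile, dict) else profile
    totals: Dict[str, float] = defaultdict(float)
    for ev in events:
        if ev.get("ph") == "X":
            totals[ev.get("cat", "<none>")] += ev.get("dur", 0) / 1000.0
    return dict(totals)


def diff_profiles(base: Dict[str, float], new: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (category, delta_ms) pairs, largest increase first."""
    cats = set(base) | set(new)
    deltas = [(c, new.get(c, 0.0) - base.get(c, 0.0)) for c in cats]
    return sorted(deltas, key=lambda kv: kv[1], reverse=True)
```

Durations are summed across threads, and across nested events of the same category, so the totals can exceed the wall time. They indicate where time moved, not how long the build took.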

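CPU time is the time the CPU spends executing user code.

- If CPU time regresses between two project commits, we suggest collecting a Starlark CPU profile. You should probably also use --nobuild to restrict the build to the analysis phase, since that is where most of the CPU-heavy work is done.

System time is the time the CPU spends in the kernel.

- If system time regresses, it is mostly correlated with I/O when Bazel reads files from your file system.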

系统级负载分析

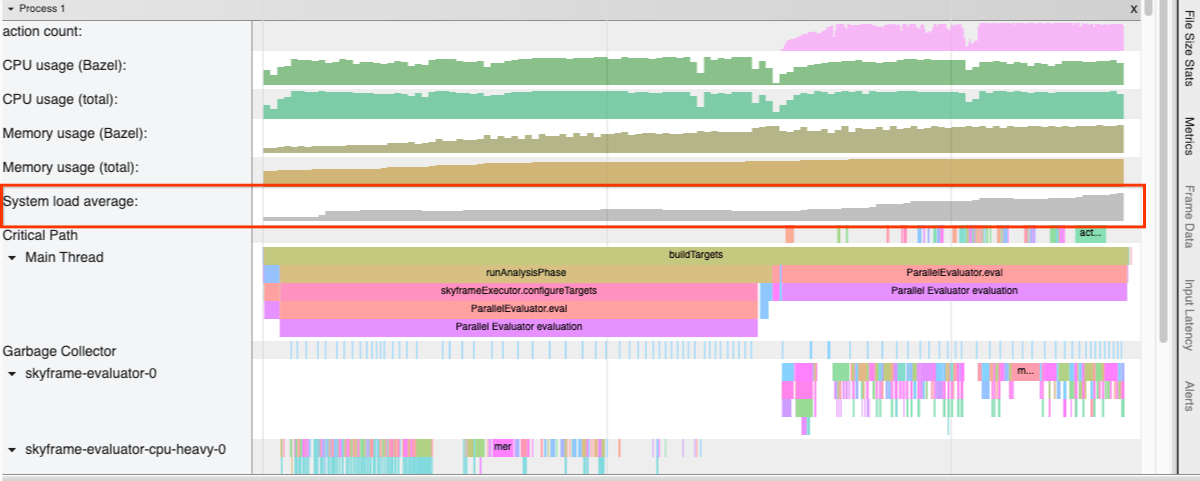

使用 Bazel 6.0 中引入的

--experimental_collect_load_average_in_profiler

标志,

JSON 跟踪分析器会在调用期间收集

系统负载平均值。

图 1. 包含系统负载平均值的配置文件。
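The load-average samples can also be summarized outside the trace viewer. This sketch assumes the profiler records them as counter events (ph == "C") whose args hold numeric values. The counter name is an assumption, so check the name used in your own profile before relying on it. The helper names are hypothetical.

```python
import os
from typing import List, Optional


def counter_values(profile, name: str) -> List[float]:
    """Collect numeric args of counter ("C") events with the given name."""
    events = profile["traceEvents"] if isinstance(profile, dict) else profile
    values: List[float] = []
    for ev in events:
        if ev.get("ph") == "C" and ev.get("name") == name:
            values.extend(float(v) for v in ev.get("args", {}).values()
                          if isinstance(v, (int, float)))
    return values


def overloaded_fraction(load: List[float], cpus: Optional[int] = None) -> float:
    """Fraction of samples in which the load average exceeded the CPU count."""
    cpus = cpus or os.cpu_count() or 1
    if not load:
        return 0.0
    return sum(1 for v in load if v > cpus) / len(load)
```

For example, `overloaded_fraction(counter_values(profile, "System load average"))` gives the share of samples in which the load exceeded the machine's CPU count. The counter name in that call is an assumption.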

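A high load during a Bazel invocation can indicate that Bazel schedules too many local actions in parallel for your machine. You might want to look into adjusting --local_cpu_resources and --local_ram_resources, especially in container environments (at least until #16512 is merged).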
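When picking a --local_cpu_resources value inside a container, one option is to start from the container's CPU quota rather than the host's CPU count. This sketch assumes a cgroup v2 host where the quota is exposed in /sys/fs/cgroup/cpu.max as "<quota> <period>", with "max" meaning unlimited. cgroup v1 uses different files. The helper names are hypothetical.

```python
import math
import os
from typing import Optional


def cpus_from_cpu_max(contents: str) -> Optional[float]:
    """Parse cgroup v2 cpu.max ("<quota> <period>"); None means no limit."""
    quota, period = contents.split()
    if quota == "max":
        return None
    return int(quota) / int(period)


def suggested_local_cpu_resources(cpu_max_path: str = "/sys/fs/cgroup/cpu.max") -> int:
    """Hypothetical helper: cap the host CPU count at the container's CPU quota."""
    host_cpus = os.cpu_count() or 1
    try:
        with open(cpu_max_path) as f:
            limit = cpus_from_cpu_max(f.read())
    except (OSError, ValueError):
        limit = None  # Not cgroup v2, or the file is missing or malformed.
    if limit is None:
        return host_cpus
    return max(1, min(host_cpus, math.floor(limit)))
```

For example, `print(f"--local_cpu_resources={suggested_local_cpu_resources()}")` prints a flag you could add to your .bazelrc. A similar approach based on the cgroup v2 memory.max file could inform --local_ram_resources.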

Monitoring Bazel memory usage

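There are two main sources for Bazel's memory usage: bazel info and the BEP.

bazel info used-heap-size-after-gc: the amount of used memory in bytes after a call to System.gc().

- Bazel bench provides benchmarks for this metric as well.
- Additionally, there are peak-heap-size, max-heap-size, used-heap-size and committed-heap-size (see documentation), but they are less relevant.

BEP's MemoryMetrics.peak_post_gc_heap_size: the size of the peak JVM heap in bytes after GC. This requires setting --memory_profile, which attempts to force a full GC.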
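Both sources can be read from a script. This is a sketch with two assumptions that you should check against your Bazel version:

- bazel info prints the value either as plain bytes or with a unit suffix such as MB, interpreted here as powers of 1024.
- The BEP JSON uses the proto3 field name peakPostGcHeapSize.

The function names are hypothetical.

```python
import json
import re
import subprocess
from typing import Iterable, Optional

# Assumed unit suffixes, interpreted as powers of 1024.
_UNITS = {"": 1, "B": 1, "KB": 1024, "MB": 1024 ** 2, "GB": 1024 ** 3}


def parse_size(text: str) -> int:
    """Parse a size such as '1048576' or '256MB' into bytes."""
    m = re.fullmatch(r"\s*(\d+)\s*([KMG]?B)?\s*", text, flags=re.IGNORECASE)
    if not m:
        raise ValueError(f"unrecognized size: {text!r}")
    return int(m.group(1)) * _UNITS[(m.group(2) or "").upper()]


def used_heap_after_gc(bazel: str = "bazel") -> int:
    """Run `bazel info used-heap-size-after-gc` and return the value in bytes."""
    out = subprocess.run([bazel, "info", "used-heap-size-after-gc"],
                         check=True, capture_output=True, text=True).stdout
    return parse_size(out)


def peak_post_gc_heap(lines: Iterable[str]) -> Optional[int]:
    """Read MemoryMetrics.peak_post_gc_heap_size from newline-delimited BEP JSON.

    Returns None when the field is absent (for example, without --memory_profile).
    """
    for line in lines:
        if line.strip():
            event = json.loads(line)
            if "buildMetrics" in event:
                value = event["buildMetrics"].get("memoryMetrics", {}).get("peakPostGcHeapSize")
                return int(value) if value is not None else None
    return None
```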

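Memory usage regressions are usually a result of regressions in build request size metrics, which are often due to the addition of dependencies or a change in a rule implementation.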

For a more granular look at Bazel's memory footprint, we recommend using the built-in memory profiler for rules.

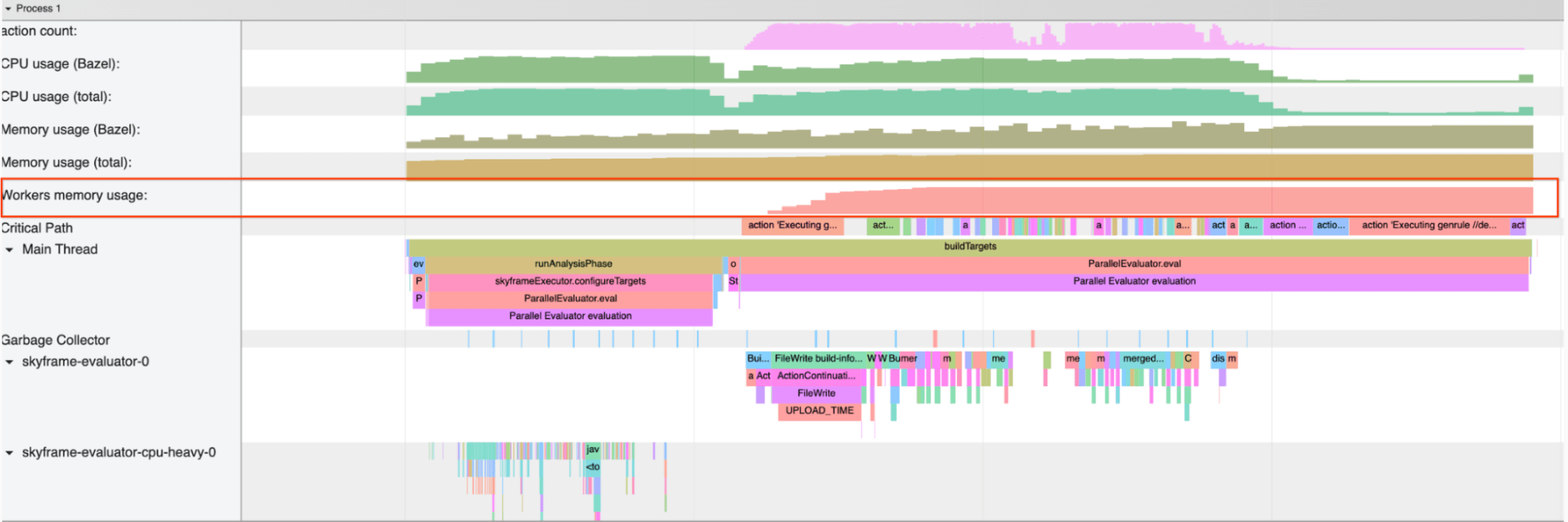

持久工作器的内存分析

虽然 持久工作器 可以显著加快构建速度

(尤其是对于解释型语言),但其内存占用情况可能

会成为问题。Bazel 会收集有关其工作器的指标,特别是 WorkerMetrics.WorkerStats.worker_memory_in_kb 字段会告知工作器使用的内存量(按助记符)。

JSON 跟踪分析器还

会在调用期间收集持久工作器内存用量,方法是传入

--experimental_collect_system_network_usage

标志(Bazel 6.0 中的新标志)。

图 2. 包含工作器内存用量的配置文件。

降低

--worker_max_instances

的值(默认值为 4)可能有助于减少

持久工作器使用的内存量。我们正在积极努力使 Bazel 的资源管理器和调度器更加智能,以便将来减少此类微调的需求。
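To see which workers dominate memory usage, the worker metrics can be grouped by mnemonic. This sketch assumes the BEP JSON exposes them as buildMetrics.workerMetrics[].workerStats[] with workerMemoryInKb and collectTimeInMs fields, and it uses the latest sample per worker. The capping estimate is a rough, hypothetical model. It assumes that --worker_max_instances limits workers per mnemonic and that workers of one mnemonic use similar amounts of memory.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def worker_memory_by_mnemonic(lines: Iterable[str]) -> Dict[str, Tuple[int, int]]:
    """Return {mnemonic: (worker_count, total_kb)} using each worker's latest sample."""
    counts: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        for worker in event.get("buildMetrics", {}).get("workerMetrics", []):
            stats = worker.get("workerStats", [])
            if not stats:
                continue  # No memory sample was collected for this worker.
            latest = max(stats, key=lambda s: int(s.get("collectTimeInMs", 0)))
            mnemonic = worker.get("mnemonic", "?")
            counts[mnemonic] += 1
            totals[mnemonic] += int(latest.get("workerMemoryInKb", 0))
    return {m: (counts[m], totals[m]) for m in counts}


def estimate_capped_kb(worker_count: int, total_kb: int, max_instances: int) -> float:
    """Rough estimate of memory if at most max_instances workers of a mnemonic ran."""
    if worker_count == 0:
        return 0.0
    # Assumes every worker of the mnemonic uses about the average amount of memory.
    return total_kb / worker_count * min(worker_count, max_instances)
```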

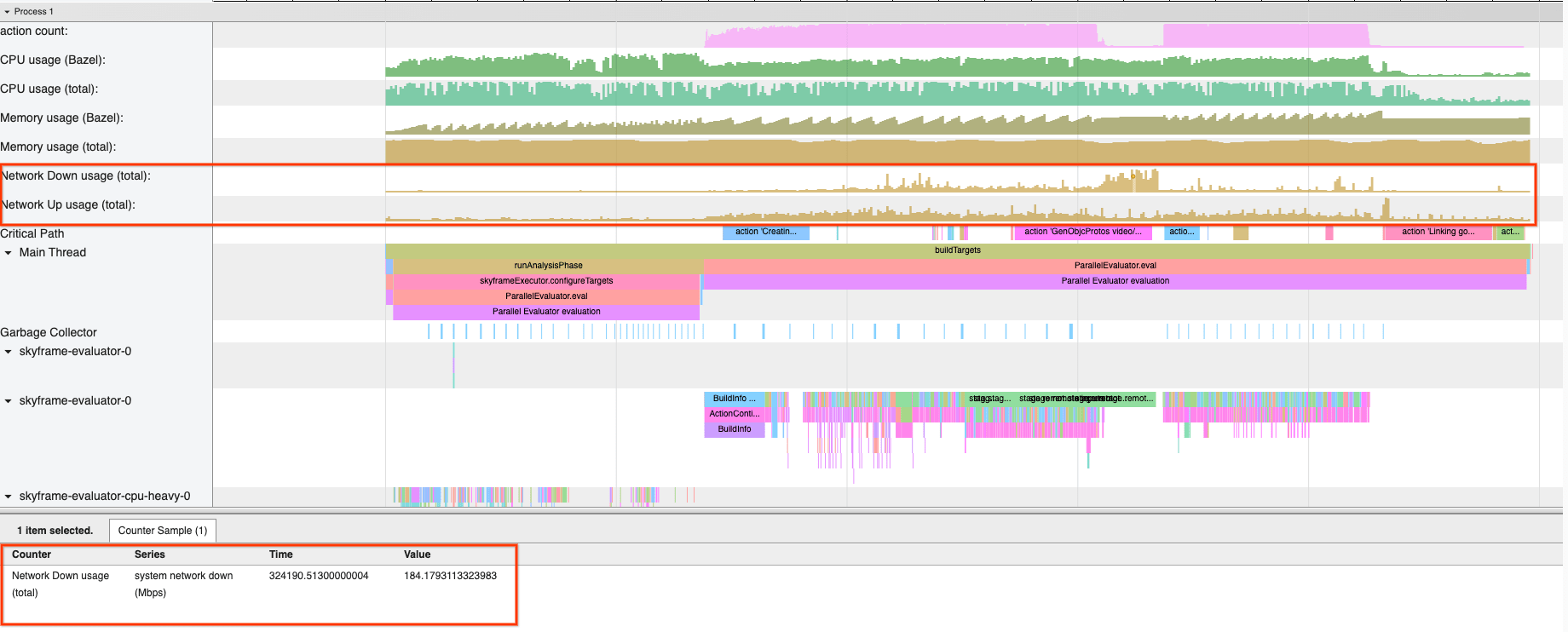

监控远程构建的网络流量

在远程执行中,Bazel 会下载执行操作后构建的制品。因此,您的网络带宽可能会影响构建的性能。

此外,JSON 跟踪配置文件

还允许您通过传入 --experimental_collect_system_network_usage 标志(Bazel

6.0 中的新标志)来查看整个构建过程中的系统级网络用量。

图 3. 包含系统级网络用量的配置文件。

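When you use remote execution, high but fairly flat network usage might indicate that the network is the bottleneck in your build. If you are not using it already, consider turning on "Build without the Bytes" by passing --remote_download_minimal. This speeds up your builds by avoiding the download of unnecessary intermediate artifacts.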
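The "high but fairly flat" signal can be made concrete with a simple heuristic over network usage samples, for example counter values taken from the profile. In this sketch, the following are all hypothetical choices that you should tune for your environment:

- the thresholds (mean at least 80% of link capacity, coefficient of variation at most 0.2),
- the function name,
- the units.

```python
import statistics
from typing import Sequence


def looks_network_bound(samples_mbps: Sequence[float], link_mbps: float,
                        high: float = 0.8, max_cv: float = 0.2) -> bool:
    """Heuristic: usage is high (mean >= high * link) and flat (low variation)."""
    if len(samples_mbps) < 2 or link_mbps <= 0:
        return False
    mean = statistics.mean(samples_mbps)
    if mean <= 0:
        return False
    cv = statistics.stdev(samples_mbps) / mean  # Coefficient of variation.
    return mean >= high * link_mbps and cv <= max_cv
```

Spiky usage that only briefly reaches the link capacity fails the flatness check. This matches the intuition that a saturated network shows up as a long plateau rather than occasional bursts.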

Another option is to configure a local disk cache to save on download bandwidth.